What It Really Means for Software Development

What Is Vibe Coding?

The term was coined by Andrej Karpathy in early 2025 to describe a mode of software development where the developer describes intent in natural language and delegates the actual writing, refactoring, and debugging to an AI model. Instead of typing every line, you describe what you want, review the output, and iterate. The AI writes. You steer.

In practice, this means using tools like Cursor, Claude Code, GitHub Copilot, or OpenAI Codex to build entire features from a conversation. The developer’s role shifts from writing code to orchestrating it.

By 2026, adoption had moved well beyond experimentation. Engineering teams are resolving real bugs, performing database migrations, and building complete APIs with minimal manual intervention. The productivity gains are real and measurable.

How the Market Is Using It Today

Adoption has been faster than most predicted. The JetBrains State of Developer Ecosystem 2025, based on responses from 24,534 developers across 194 countries, found that 85% of developers now regularly use AI tools for coding and development, and 62% rely on at least one AI coding assistant, agent, or AI-powered editor. The tools have moved from novelty to infrastructure.

Developers use AI to generate boilerplate, write unit tests, explain unfamiliar codebases, suggest refactors, and accelerate the parts of the job that are repetitive and well-defined. The more ambiguous and architectural the problem, the less AI contributes without strong human guidance.

At the enterprise level, the conversation has shifted from “should we use this?” to “how do we use this responsibly?” Teams are building internal guidelines around which tools are approved, what code can be sent to external APIs, and how AI-generated code gets reviewed before it reaches production. Organizations that haven’t had this conversation yet are taking on risks they may not fully understand.

How Big Tech Is Using It

The clearest signal that vibe coding crossed from experimentation to production came in February 2026, during Spotify’s Q4 earnings call.

Spotify co-CEO Gustav Söderström stated that the company’s best developers had not written a single line of code since December. The system behind this shift is called Honk, an internal AI development environment built on Claude Code. Instead of manually debugging or building features, Spotify’s top engineers now direct AI agents via Slack that write, test, and deploy code in real time. As Söderström described it: an engineer on their morning commute can tell Claude via Slack to fix a bug or add a feature, receive a new version of the app on their phone, and merge it to production before arriving at the office.

Spotify attributed significant productivity gains to Honk, shipping more than 50 new features and updates to its streaming app in 2025 alone. Spotify serves hundreds of millions of users across multiple platforms and regions, with a complex technology stack that makes this a meaningful reference point. The announcement was made on a regulated earnings call, in front of investors, with named systems and specific timelines.

An internal Meta document obtained by Business Insider in March 2026 shows specific AI adoption targets across different engineering organizations. Meta’s creation org, responsible for building and maintaining core creative experiences, set a goal for the first half of 2026 that 65% of engineers should write more than 75% of their committed code using AI. The company’s Scalable ML team had a February 2026 target of 50% to 80% AI-assisted code. Companywide, Meta set a Q4 2025 goal for 55% of software engineers’ code changes across central product organizations to be agent-assisted, alongside 80% adoption of general AI tools among mid to senior-level engineers. A Meta spokesperson confirmed to Business Insider that the company’s performance program is focused on rewarding impact from AI tools, not just usage.

The question for most engineering organizations is no longer whether to adopt AI coding tools. It is how to do it with appropriate governance, quality controls, and strategic intent.

The Benchmark Question: How Do We Actually Measure This?

Before discussing which models to use, it’s worth understanding how they’re evaluated, because the numbers that appear in announcements don’t always mean what they seem.

The dominant benchmark for AI software engineering today is SWE-bench, introduced by Princeton researchers in 2024. The setup is straightforward: given a real GitHub issue and the full codebase of a popular Python repository, the model must generate a patch that resolves the issue and passes the associated unit tests. No hints about which files to edit. No simplified toy problems. Real repositories like Django, scikit-learn, and matplotlib.
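The pass/fail criterion can be made concrete with a short sketch. SWE-bench separates tests the fix is expected to turn from failing to passing from tests that must keep passing; the class and function names below are illustrative and are not the official harness.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """Outcome of running one task's tests after applying the model's patch."""
    task_id: str
    fail_to_pass: dict[str, bool]  # tests the fix should turn green -> did they pass?
    pass_to_pass: dict[str, bool]  # tests that must stay green -> did they pass?


def is_resolved(result: TaskResult) -> bool:
    """A task counts as resolved only if every test in both groups passes."""
    return all(result.fail_to_pass.values()) and all(result.pass_to_pass.values())


def resolve_rate(results: list[TaskResult]) -> float:
    """Percentage of tasks resolved, the headline number on leaderboards."""
    if not results:
        return 0.0
    return 100.0 * sum(is_resolved(r) for r in results) / len(results)


results = [
    TaskResult("task-1", {"test_bug": True}, {"test_old": True}),
    TaskResult("task-2", {"test_bug": True}, {"test_old": False}),  # fixed, but regressed
    TaskResult("task-3", {"test_bug": False}, {"test_old": True}),  # issue not fixed
    TaskResult("task-4", {"test_bug": True}, {"test_old": True}),
]
print(f"{resolve_rate(results):.1f}% resolved")
```

Note that a patch which fixes the issue but breaks an existing test counts as unresolved, which is why partial fixes earn no credit.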

When SWE-bench launched, the best available model, Claude 2, resolved just 1.96% of issues. By early 2026, the leading models exceed 70% on the most widely cited variant, SWE-bench Verified. That trajectory tells an important story about the pace of progress.

There is, however, a significant caveat. SWE-bench Verified, the 500-task version validated by human experts and used in most public leaderboards, is likely contaminated. In machine learning, contamination means that the evaluation data appeared in the model’s training data. When that happens, the model isn’t solving a problem it has never seen before. It may simply be recalling a solution it was exposed to during training. The benchmark stops measuring reasoning ability and starts measuring memorization. OpenAI audited their own models and found that every frontier model tested could reproduce verbatim solutions for certain tasks in SWE-bench Verified, which strongly suggests this is happening. As a result, OpenAI stopped reporting Verified scores entirely and recommends SWE-bench Pro as the more reliable reference.
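One rough way to picture a verbatim-reproduction check is to measure how much of a reference patch reappears character for character in a model's output. The sketch below uses Python's standard `difflib` for that. It is an illustration under assumed inputs, not the method OpenAI used in its audit, and the 0.8 threshold is an arbitrary assumption.

```python
from difflib import SequenceMatcher


def longest_shared_run(reference: str, generated: str) -> int:
    """Length in characters of the longest substring common to both texts."""
    matcher = SequenceMatcher(None, reference, generated, autojunk=False)
    match = matcher.find_longest_match(0, len(reference), 0, len(generated))
    return match.size


def looks_memorized(reference: str, generated: str, threshold: float = 0.8) -> bool:
    """Flag outputs that reproduce most of the reference patch verbatim."""
    if not reference:
        return False
    return longest_shared_run(reference, generated) / len(reference) >= threshold


reference_patch = "if value is None:\n    return default\n"
copied_output = "# fix\n" + reference_patch              # reference reproduced exactly
independent_output = "return default if value is None else value\n"

print(looks_memorized(reference_patch, copied_output))
print(looks_memorized(reference_patch, independent_output))
```

A high overlap on a short patch can also happen by chance, so a check like this is a screening signal rather than proof of contamination.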

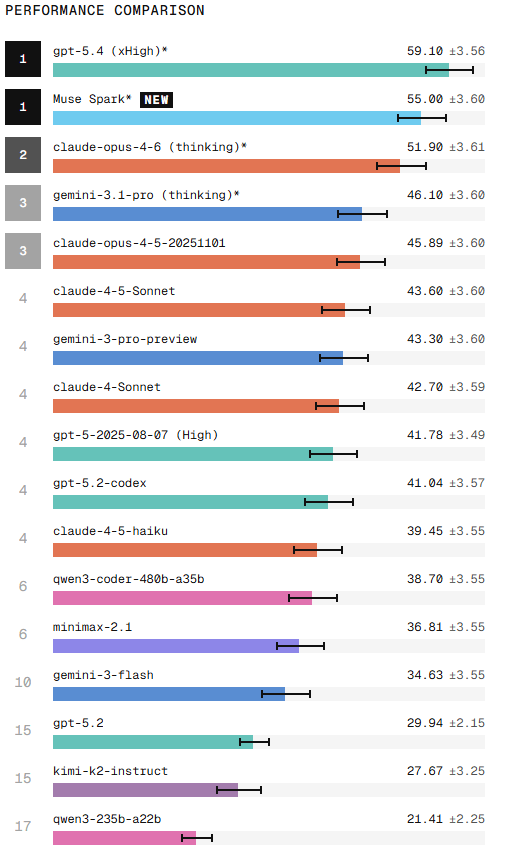

SWE-bench Pro, developed by Scale AI, uses repositories under GPL licenses to reduce contamination risk, includes tasks across multiple languages and not just Python, and requires edits of at least 10 lines, filtering out the trivial one-line fixes that inflate Verified scores. On this benchmark, scores drop dramatically. The same Claude Opus 4.5 that scores 76.8% on Verified scores 45.9% on Pro under standardized conditions.

The practical implication is simple: when a vendor announces a benchmark score, the first question should always be which variant. Scores on Verified and Pro describe very different levels of real-world capability.

Leading models in 2026

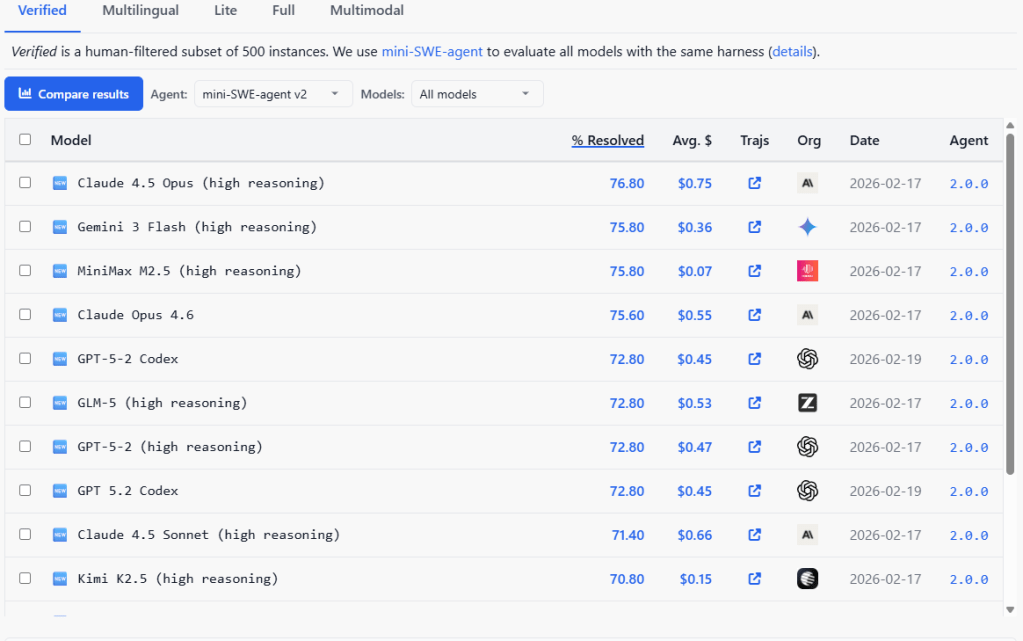

The scores below reflect the SWE-bench Verified leaderboard as of April 20, 2026. All models were evaluated using mini-SWE-agent v2, a minimal bash-only agent implemented in under 100 lines of Python, developed by the SWE-bench team specifically to eliminate scaffold differences between models. Every model gets the same simple setup: a bash terminal and nothing else. No proprietary tools, no special context management, no optimizations. This is what makes the comparison meaningful, and also why these numbers are lower than the scores vendors report using their own optimized agent systems. The same model evaluated with Claude Code or Codex typically scores 5-15 points higher than with mini-SWE-agent v2. These numbers change frequently as new models are submitted. For the latest rankings, check swebench.com directly.

On SWE-bench Verified, Claude 4.5 Opus leads at 76.8% at an average cost of $0.75 per task. Gemini 3 Flash and MiniMax M2.5 follow tied at 75.8%, with a notable difference: MiniMax costs $0.07 per task versus $0.36 for Gemini. Claude Opus 4.6 reaches 75.6%, and GPT-5.2 Codex, GLM-5, and GPT-5.2 all land at 72.8%. Claude 4.5 Sonnet reaches 71.4% and Kimi K2.5 closes the top 10 at 70.8% at $0.15 per task.
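Score and price can be folded into one figure: the expected spend per resolved task, which is the cost per task divided by the resolve rate. The sketch below does that arithmetic for the four models whose cost is quoted above. It assumes the quoted cost is an average over all attempted tasks, resolved or not.

```python
# (SWE-bench Verified score in %, average cost per task in USD), as quoted above
models = {
    "Claude 4.5 Opus": (76.8, 0.75),
    "Gemini 3 Flash": (75.8, 0.36),
    "MiniMax M2.5": (75.8, 0.07),
    "Kimi K2.5": (70.8, 0.15),
}


def cost_per_resolved_task(score_pct: float, cost_per_task: float) -> float:
    """Average spend needed to obtain one resolved task."""
    return cost_per_task / (score_pct / 100.0)


# Print the models from cheapest to most expensive per resolved task.
ranked = sorted(models.items(), key=lambda kv: cost_per_resolved_task(*kv[1]))
for name, (score, cost) in ranked:
    print(f"{name:<16} ${cost_per_resolved_task(score, cost):.2f} per resolved task")
```

On these figures MiniMax M2.5 works out to roughly $0.09 per resolved task against roughly $0.98 for Claude 4.5 Opus, about a tenfold difference for a one-point gap in score.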

https://labs.scale.com/leaderboard/swe_bench_pro_public

On SWE-bench Pro, GPT-5.4 leads at 59.1%, followed by Claude Opus 4.6 with thinking at 51.9%, Gemini 3.1 Pro at 46.1%, and Claude Opus 4.5 at 45.9%. The remaining models score between 27% and 43%.

An important caveat when reading these two benchmarks together: the model versions are not always the same. MiniMax M2.5 appears in Verified while MiniMax M2.1 was evaluated in Pro. Kimi K2.5 appears in Verified while Kimi K2 appears in Pro. Evaluations were run at different times with different scaffolds. Direct comparisons should be made carefully.

Why benchmark scores should be read with caution

Benchmarks have three structural limitations worth understanding before using them to make decisions.

The contamination problem. AI models are trained on enormous amounts of text from the internet. SWE-bench Verified was published in 2024, which means its 500 tasks, the GitHub issues, the codebases, the correct solutions, have almost certainly appeared in the training data of every model released since then. When a model encounters a task it has seen before, it is not solving a new problem. It is recalling a solution. The benchmark stops measuring reasoning ability and starts measuring memory. OpenAI confirmed this when they audited their own models and found that frontier models could reproduce verbatim solutions for certain Verified tasks. That is why they stopped reporting Verified scores.

SWE-bench Pro was designed to address this. It uses repositories under GPL licenses, which creates legal barriers against including the code in commercial training datasets, and draws from private proprietary codebases that models could not have seen. The result is a benchmark that is harder to game, and significantly harder in absolute terms. Models that score 70-76% on Verified score 27-59% on Pro. The gap between those two numbers is, in large part, the contamination effect.

The agent system problem. A benchmark score does not measure a model in isolation. It measures a model plus the complete system around it: how the model receives the task, which tools it can use, how many attempts it gets, how it navigates files, and how it decides when it is done. The same underlying model can score 23% with a basic setup and 59% with an optimized one. When vendors report benchmark scores using their own proprietary setups, and most do, the numbers are not directly comparable. You are comparing different systems, not different models. SWE-bench Pro addresses this partially by using a standardized setup across all models, which is why its leaderboard is a more controlled comparison.
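To make "the system around the model" concrete, here is a minimal sketch of a bash-only agent loop. It is not mini-SWE-agent or any vendor's scaffold: `scripted_model`, the `DONE` sentinel, and the step budget are hypothetical stand-ins for a real model call and stopping rule.

```python
import subprocess
from typing import Callable

Model = Callable[[list[str]], str]  # transcript in, next shell command out


def run_agent(model: Model, task: str, max_steps: int = 10) -> list[str]:
    """Give the task to the model, run each command it proposes, return the transcript."""
    transcript = [f"TASK: {task}"]
    for _ in range(max_steps):      # the step budget is a scaffold decision
        command = model(transcript).strip()
        if command == "DONE":       # hypothetical stop signal chosen for this sketch
            break
        # A real harness would run this inside a sandbox, never on the host directly.
        completed = subprocess.run(
            command, shell=True, capture_output=True, text=True, timeout=30
        )
        # What the model sees next (stdout, stderr, exit code) is also a scaffold decision.
        transcript.append(f"$ {command}")
        transcript.append(
            completed.stdout + completed.stderr + f"[exit {completed.returncode}]"
        )
    return transcript


def scripted_model(transcript: list[str]) -> str:
    """Stand-in for a real model: replays a fixed plan based on how many steps have run."""
    plan = ["echo hello", "DONE"]
    steps_taken = (len(transcript) - 1) // 2  # each step adds two transcript entries
    return plan[min(steps_taken, len(plan) - 1)]


if __name__ == "__main__":
    for line in run_agent(scripted_model, "print a greeting"):
        print(line)
```

Every parameter here (the step budget, the timeout, how much output is fed back) can move a benchmark score without the model changing at all.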

The metric problem. The benchmark only measures whether the generated code passes predefined tests. A patch that passes the tests but introduces a security vulnerability, adds technical debt, or breaks conventions the tests don’t cover scores the same as a clean, well-reviewed solution. The number tells you nothing about code quality, maintainability, or security.
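A small example shows how a patch can pass its tests and still be unsafe. The two lookup functions below are hypothetical and use Python's built-in `sqlite3` module. Both satisfy the same test, but only one survives an input the test never tried.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Builds SQL by string interpolation: fine for normal input, injectable otherwise.
    return conn.execute(f"SELECT id, name FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Parameterized query: the driver treats `name` strictly as data.
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (name,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

# The kind of check a predefined test suite contains: both versions pass.
assert find_user_unsafe(conn, "alice") == [(1, "alice")]
assert find_user_safe(conn, "alice") == [(1, "alice")]

# An input the tests never tried: the unsafe version returns every row.
payload = "x' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2
print(len(find_user_safe(conn, payload)))    # 0
```

A benchmark that only runs the first two assertions would score both implementations identically.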

The trajectory from 1.96% in 2024 to 70%+ in 2026 reflects real progress in model capability. Benchmarks provide a useful general picture of how models compare. But a single number should never be the primary basis for a model selection decision. The most reliable evaluation is running your own tasks, on your own codebase, with your own definition of what a good solution looks like.

Why Claude and Codex Feel Like First Division in Practice

If the benchmarks are converging, why do developers consistently report that Claude and Codex feel qualitatively different for real development work?

Three reasons stand out.

The first is how these models handle ambiguous intent. Vibe coding prompts are rarely precise technical specifications. You describe what you want in natural language, often incompletely, and expect the model to infer the rest. Claude handles vague, underspecified prompts better than most alternatives. Other models, including those with comparable benchmark scores, tend to require more explicit instruction to reach the same output.

The second is context window size and coherence. Claude Code operates with a 1 million token context window. In a greenfield project that grows over hours of iteration, maintaining coherence across the entire codebase requires holding a lot in working memory. Models with 128k-256k context windows hit limits that are noticeable in practice.

The third is ecosystem maturity. The volume of community documentation, examples, prompt engineering guides, and troubleshooting resources around Claude and Codex is orders of magnitude larger than for newer alternatives.

This doesn’t mean newer models aren’t capable. It means the complete system, including tooling, documentation, and community, is part of what you’re evaluating.

Security Problems

AI coding tools introduce two categories of security risk that deserve serious attention from any organization using them in production.

The first is vulnerability propagation in generated code. AI models generate code that compiles and passes tests, but passing tests is not the same as being secure. A December 2025 analysis by CodeRabbit of 470 real-world open source pull requests found that AI-generated code averaged 1.7x more issues per PR than human-written code. Security vulnerabilities were 1.5 to 2x more frequent, and performance inefficiencies appeared 8x more often. The model doesn’t know your threat model. It optimizes for code that works, not code that is safe.

A developer who understands security can review generated code critically. A developer who cannot is shipping vulnerabilities at AI speed. The productivity gains of vibe coding can become a liability if code review practices don’t evolve alongside adoption.

The second risk is more structural and less discussed. These tools have access to your entire development environment. When you use Claude Code, Codex, or Cursor in agent mode, the tool reads your files, your environment variables, your configuration, and potentially your credentials. That context is sent to external APIs operated by Anthropic, OpenAI, or Microsoft. For most consumer applications this is acceptable. For organizations working with proprietary codebases, regulated data, or sensitive infrastructure, this is a significant exposure that requires explicit policy decisions, not assumptions.

The question is not whether these companies are trustworthy. The question is whether your organization’s security posture and compliance obligations permit sending that context outside your network boundary.
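One concrete form such a policy can take is a filter that masks likely secrets before any environment data is placed in a prompt. The sketch below is an illustration under loud assumptions: the name patterns and the `redact_env` helper are hypothetical, and matching on variable names will miss secrets stored under innocuous names while occasionally masking harmless ones.

```python
import re

# Hypothetical naming patterns; a real policy would be reviewed by a security team.
SECRET_NAME = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD|PASSWD|CREDENTIAL)", re.IGNORECASE)


def redact_env(env: dict[str, str]) -> dict[str, str]:
    """Return a copy of env with the values of secret-looking variables masked."""
    return {
        name: "[REDACTED]" if SECRET_NAME.search(name) else value
        for name, value in env.items()
    }


example_env = {
    "PATH": "/usr/bin",
    "AWS_SECRET_ACCESS_KEY": "abc123",
    "DATABASE_PASSWORD": "hunter2",
    "EDITOR": "vim",
}
print(redact_env(example_env))
```

A filter like this reduces accidental exposure but does not replace the policy decision itself, since files and configuration read by the agent can carry the same secrets.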

The Impact on Developer Roles and the Junior Pipeline

The productivity gains from AI coding tools are real, and the market is adjusting accordingly. Companies are hiring fewer junior developers, expecting existing engineers to cover more ground with AI assistance, and concentrating demand at senior levels where judgment and architecture matter more than raw output. A survey by Hired.com found that job postings requiring experience with AI coding tools increased by 340% between January 2025 and January 2026, while postings for pure implementation roles declined by 17%.

This creates a structural problem that the industry hasn’t resolved. If junior developers aren’t being hired at scale, how does the next generation of senior developers form?

Senior engineering expertise is not simply accumulated knowledge. It is pattern recognition built through years of debugging, shipping, maintaining, and breaking things in production. That experience has to come from somewhere. AI tools can accelerate certain parts of the learning curve, but they cannot substitute for the judgment that develops through direct exposure to failure and consequence.

The developer who relies on AI to generate code without understanding what it generates is not developing that judgment. They are building a dependency. When the AI produces something subtly wrong, and it does, regularly, the developer without deep understanding of the underlying systems cannot catch it.

The Education Question

If a student today learns to code primarily through AI assistance, a legitimate question emerges: are they learning to program, or learning to prompt?

Understanding how systems behave, why they fail, and how to reason about complexity requires direct exposure to failure. You build that understanding by writing bad code, debugging problems that don’t have obvious solutions, and dealing with the consequences of architectural decisions over time. AI tools can short-circuit that process. A student who generates a working solution without understanding it has not learned to solve the problem. They have learned to delegate it.

This creates real challenges for educators. How do you teach algorithmic thinking when the algorithm can be generated on demand? How do you evaluate understanding when the AI can produce the answer? How do you build the foundation of knowledge that enables systematic thinking about software at scale?

There are no clean answers. The most likely outcome is that baseline expectations for software developers shift, with less emphasis on syntax and boilerplate, and more on architecture, security, and evaluation of AI output.
